The best engineer on your team in 2026 probably isn't the one who writes the cleanest TypeScript. It's the one who can look at a broken AI output, diagnose exactly why the model went wrong, redesign the context window that fed it, and ship a reliable system before noon. That's not a coding skill. That's prompt architecture. And it's become the highest-leverage technical skill in software engineering.

This isn't a soft claim. It's a structural shift in what engineering productivity actually means when 70-80% of the code your team ships is AI-generated. The bottleneck has moved. It's no longer "can you write this function?" It's "can you communicate intent to a model precisely enough that it produces production-ready output, at scale, reliably?" The engineers who answer yes to that question are becoming extraordinarily valuable. The ones who can't are becoming expensive bottlenecks.

The Shift Already Happened — Most Leaders Missed It

In 2024, AI coding tools were a productivity experiment. Engineers played with Copilot, dropped prompts into Claude, got impressed, moved on. The interaction model was basically: ask, get code, edit heavily, ship.

In 2026, that model is dead. Companies aren't experimenting with AI in chat interfaces anymore; they're shipping GenAI systems to real users at scale. That transition changed everything about what "good engineering" looks like. When you're running a production system powered by language models, the game isn't "find a prompt that works once." The game is: control model behavior consistently, test reliability across edge cases, own outcomes when the model surprises you.

That requires a fundamentally different skill set. The engineers who built their careers on knowing every nuance of a framework's API? They're still valuable. But the engineers who can architect how a model receives information (what context it sees, in what order, in what form) are the ones driving output multipliers.

The way I think about it: every company is going to need to figure out how to use AI. The companies that figure it out first are going to have a huge advantage.

— Sam Altman, CEO at OpenAI

This is exactly the leverage point. The companies figuring it out fastest aren't buying better tools. They're hiring engineers who understand how to wield those tools with precision.

Why "Prompt Engineering" Was Just the Beginning

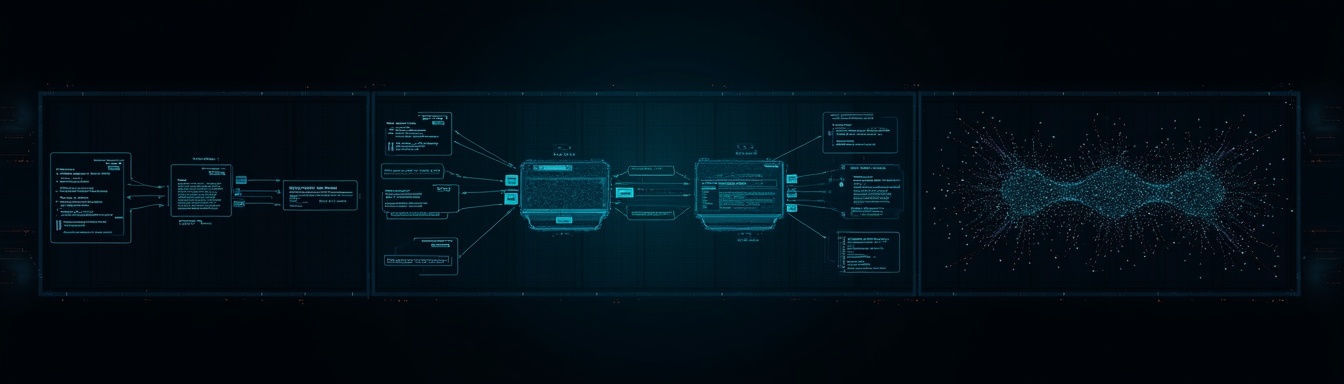

Here's where most of the conversation gets it wrong: "prompt engineering" as a standalone skill — write better sentences, get better outputs — is table stakes. The real discipline is something larger. The term that's taken hold in serious engineering circles is context engineering: the practice of designing the entire information environment that a model operates within. That includes:

- System instructions: the persistent behavioral contracts that define how a model operates
- Retrieval strategy: deciding what documents, embeddings, or data get pulled into context, when, and why
- Memory management: what persists across sessions, what gets summarized, what gets discarded
- State tracking: how the model understands where it is in a multi-step workflow
- Schema and tool contracts: how you define the structured interfaces between model outputs and downstream systems

This is systems design. It's not "write better prompts." It's engineering the information architecture that a model reasons within. And it requires the same mental models as distributed systems design — just applied to a different kind of runtime. The engineers who get this aren't glorified chatbot users. They're designing cognitive pipelines.
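To make the five pieces above less abstract, here is a minimal sketch of a context assembler. Everything in it is an assumption made for illustration: the `ContextWindow` class, its field names, the bracketed section labels, and the character budget are hypothetical and not taken from any particular framework. The point is only that section order, omission of empty sections, and a size limit are explicit design decisions.

```python
from dataclasses import dataclass, field


@dataclass
class ContextWindow:
    """Hypothetical container for everything a model sees on one call."""
    system_instructions: str
    retrieved_docs: list[str] = field(default_factory=list)
    memory_summary: str = ""
    workflow_state: str = ""
    tool_schemas: list[dict] = field(default_factory=list)

    def render(self, user_message: str, max_chars: int = 4000) -> str:
        """Assemble sections in a fixed order and enforce a crude size budget."""
        sections = [
            ("SYSTEM", self.system_instructions),
            ("TOOLS", "\n".join(str(s) for s in self.tool_schemas)),
            ("MEMORY", self.memory_summary),
            ("STATE", self.workflow_state),
            ("DOCUMENTS", "\n---\n".join(self.retrieved_docs)),
            ("USER", user_message),
        ]
        # Skip empty sections so the model is never shown a blank header.
        rendered = "\n\n".join(
            f"[{name}]\n{body}" for name, body in sections if body
        )
        if len(rendered) > max_chars:
            raise ValueError(
                f"context is {len(rendered)} chars, budget is {max_chars}"
            )
        return rendered


# Illustrative values only.
ctx = ContextWindow(
    system_instructions="You are a code review assistant. Reply in JSON.",
    retrieved_docs=["style-guide.md: prefer early returns"],
    workflow_state="step 2 of 3: reviewing diff",
    tool_schemas=[{"name": "post_comment", "args": {"line": "int", "body": "str"}}],
)
prompt = ctx.render("Review this diff: ...")
print(prompt)
```

A real system would budget in tokens rather than characters and would likely trim or summarize instead of raising, but even this toy version makes the context a reviewable artifact rather than an ad hoc string.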

The Productivity Gap Is Already Measurable

The argument for prompt architecture isn't theoretical; you can see it in output quality right now. Developers who invest in structured prompt design produce code that far more often works on the first try and integrates with existing patterns without heavy editing. That design has three parts: establishing technical context first (language, frameworks, architecture patterns), then issuing specific instructions with clear action verbs, then defining success criteria and edge cases.

Developers who don't? They spend 40-60% of their "AI-assisted" coding time re-editing, re-prompting, and debugging model-generated code that doesn't fit the actual system. The tool is fast; the workflow is broken.

The delta isn't about which model you're using. GPT-4o, Claude 3.7 Sonnet, Gemini 2.0: they're all capable enough. The delta is whether the engineer feeding them understands how to structure context so the model can reason correctly about the specific problem at hand.

| Skill Level | First-Pass Code Quality | Integration Edits | Edge Case Coverage |
|---|---|---|---|
| Vague prompting | ~40% usable | Heavy | Minimal |
| Structured prompt design | ~75% usable | Light | Moderate |
| Full context engineering | ~90% usable | Minimal | Systematic |

These aren't published benchmarks — they're directional estimates from teams who've measured. But every engineering leader who's been paying attention has seen this pattern play out on their own teams.
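The "structured prompt design" row is easier to picture with an example. The sketch below follows the ordering described earlier in this section (technical context, then a specific instruction, then success criteria and edge cases). The `build_prompt` function, its parameters, and the section headings it emits are hypothetical, chosen only for illustration.

```python
def build_prompt(
    tech_context: dict[str, str],
    instruction: str,
    success_criteria: list[str],
    edge_cases: list[str],
) -> str:
    """Render a prompt in a fixed order: context, task, criteria, edge cases."""
    lines = ["## Technical context"]
    lines += [f"- {key}: {value}" for key, value in tech_context.items()]
    lines += ["", "## Task", instruction]
    lines += ["", "## Success criteria"]
    lines += [f"- {item}" for item in success_criteria]
    lines += ["", "## Edge cases to handle"]
    lines += [f"- {item}" for item in edge_cases]
    return "\n".join(lines)


# Illustrative values only.
structured = build_prompt(
    tech_context={
        "language": "TypeScript",
        "framework": "Express",
        "pattern": "repository layer, no direct DB calls in handlers",
    },
    instruction="Implement a handler that returns a user by id.",
    success_criteria=[
        "Compiles under strict mode",
        "Uses the existing UserRepository interface",
    ],
    edge_cases=["id is not a valid UUID", "user does not exist"],
)
print(structured)
```

Compare that with the vague version of the same request, "write an endpoint to get a user", and the difference in what the model has to guess becomes obvious.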

The Strongest Counterargument — And Why It Actually Strengthens the Thesis

Here's the honest pushback: prompt engineering as a standalone role doesn't integrate cleanly into product teams. Companies that tried to hire "prompt engineers" in isolation found them disconnected from the system context needed to actually improve outputs. The work expanded into QA, testing, model safety, and evaluation, and those functions need to live inside engineering teams, not alongside them.

That's a fair critique of the framing. But it doesn't undermine the thesis; it sharpens it. The point was never "hire a prompt wizard." The point is that every strong engineer in 2026 needs context engineering as a core competency, integrated into how they approach system design. The same way every strong engineer needed to understand databases, or networking, or concurrency: not as specialists, but as practitioners.

The engineers who treat prompting as a layer on top of their existing work, rather than a first-class design problem, are the ones shipping fragile AI features that behave unpredictably in production. The engineers who bring context architecture into their design process from day one are the ones shipping reliable systems. It's not a new role. It's a new requirement for the role.

What This Means for Hiring

If context engineering is now a core engineering competency, your hiring process needs to surface it. Most hiring processes don't. Traditional technical interviews test data structures, algorithms, system design, and code review. None of those surface how a candidate thinks about model behavior, context window design, or retrieval strategy. You can ace every LeetCode problem and still be completely lost when asked to debug why a RAG pipeline is returning hallucinated citations. The engineers you need in 2026 can answer questions like:

- "Walk me through how you'd design the context window for a multi-step code review agent."
- "What breaks in a retrieval system when the chunk size is wrong?"
- "How do you test model behavior systematically across edge cases?"

These are real engineering questions. They're not soft skills. And they require a different kind of hire: an AI-native engineer who's internalized how models actually work, not just how to paste code into a chat window.

This is exactly the gap traditional hiring platforms leave open, because they were built before anyone needed to screen for it. LinkedIn surfaces years of experience and framework keywords. Toptal and similar platforms screen for clean code under pressure. Neither tells you whether a candidate can architect a context system that behaves reliably when a user does something unexpected.

Finding AI-native engineers, ones who bring context engineering fluency as a native skill rather than a recent add-on, is harder than it looks. The signals are different. The interviews need to be different. The job descriptions need to be different. Nextdev is built to surface this specific class of engineer, because the old playbooks don't work for this hiring problem.
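The chunk-size question from the list above has a concrete answer that a strong candidate can usually demonstrate in a few lines. The sketch below is a deliberately toy setup: `chunk` splits on raw character counts, and `retrieve` is a keyword matcher standing in for a real embedding search. Both functions and the sample document are assumptions made for illustration.

```python
def chunk(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into fixed-size character chunks with optional overlap."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]


def retrieve(chunks: list[str], terms: list[str]) -> list[str]:
    """Toy retriever: return the chunks that contain every query term."""
    return [c for c in chunks if all(t.lower() in c.lower() for t in terms)]


doc = (
    "Refunds are available within 30 days of purchase. "
    "Enterprise customers must contact their account manager for refunds."
)
query = ["enterprise", "account manager"]

# 40-character chunks cut the second sentence apart, so no single chunk
# holds both "enterprise" and "account manager".
too_small = retrieve(chunk(doc, size=40), query)

# Larger chunks with overlap keep the whole sentence together in one chunk.
reasonable = retrieve(chunk(doc, size=80, overlap=30), query)

print(too_small)
print(reasonable)
```

With the small chunk size the retriever returns nothing, so the model never sees the enterprise refund rule and is left to guess. Chunks that are too large fail in the opposite direction: the relevant sentence gets retrieved, but buried in unrelated text that eats the context budget.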

What Engineering Leaders Should Do This Quarter

Audit your current team's context engineering fluency. Run a structured exercise: give your engineers a broken AI feature and ask them to diagnose it at the context level. Who thinks in terms of retrieval? Memory? System instructions? Who jumps straight to "the model is wrong"? That gap tells you exactly where your training investment should go.

Add context architecture to your design review process. Every AI feature should have a documented context design — what the model sees, what it doesn't, why. Treat this like you'd treat a schema migration: it needs review, versioning, and explicit ownership.
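One possible shape for a "documented context design" is a small versioned record checked in next to the feature. The sketch below is an assumption about how a team might do this, not an established convention: the `ContextDesign` fields, the `content_hash` method, and the `needs_version_bump` check are all hypothetical names.

```python
from dataclasses import asdict, dataclass, replace
import hashlib
import json


@dataclass(frozen=True)
class ContextDesign:
    """Hypothetical review record: what the model sees, what it doesn't, why."""
    feature: str
    owner: str
    version: str
    model_sees: tuple[str, ...]
    model_does_not_see: tuple[str, ...]
    rationale: str

    def content_hash(self) -> str:
        """Hash every field except the version label."""
        body = asdict(self)
        body.pop("version")
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


def needs_version_bump(old: ContextDesign, new: ContextDesign) -> bool:
    """True when the design changed but the version label did not."""
    return old.content_hash() != new.content_hash() and old.version == new.version


# Illustrative values only.
v1 = ContextDesign(
    feature="code-review-agent",
    owner="platform-team",
    version="1.0.0",
    model_sees=("diff", "style guide excerpts"),
    model_does_not_see=("secrets", "unrelated files"),
    rationale="Keep the window focused on the change under review.",
)
silent_change = replace(v1, model_sees=("diff", "style guide excerpts", "full repo"))
proper_change = replace(silent_change, version="1.1.0")

print(needs_version_bump(v1, silent_change))
print(needs_version_bump(v1, proper_change))
```

A check like `needs_version_bump` could run in CI, which gives context changes the same friction a schema migration has: someone has to notice, bump the version, and own the change.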

Rewrite your technical interview rubric. Include at least one question that evaluates how a candidate thinks about model behavior and context design. Not "do you use Cursor?" — but "describe a time you had to debug unreliable model output. What was the root cause?"

Invest in shared prompt and context patterns. The best teams are building internal libraries of context templates, system instruction patterns, and retrieval strategies the same way they've always built shared component libraries. This is engineering infrastructure. Treat it that way.

Hire for AI-native, not AI-adjacent. The engineer who "learned to use Copilot last year" is not the same as an engineer who has shipped production RAG systems, designed multi-agent workflows, or built systematic evaluation pipelines. Be precise about what you actually need — and use hiring processes that can tell the difference.
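For calibration, a "systematic evaluation pipeline" at its very smallest looks something like the sketch below. The `stub_model` function is a hypothetical, deterministic stand-in for a real model call, and `is_valid_review`, `run_eval`, and the case names are likewise assumptions made for illustration. A production pipeline would add many more cases, real model calls, and tracking over time.

```python
import json
from typing import Callable


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call; deterministic for the example."""
    if not prompt.strip():
        return "I need more information."
    return json.dumps({"summary": prompt[:20], "risk": "low"})


def is_valid_review(output: str) -> bool:
    """Check the output contract: a JSON object with the required keys."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and {"summary", "risk"} <= data.keys()


def run_eval(
    model: Callable[[str], str],
    cases: dict[str, str],
    check: Callable[[str], bool],
) -> dict:
    """Run every named case through the model and report which ones fail."""
    failures = [name for name, prompt in cases.items() if not check(model(prompt))]
    return {
        "total": len(cases),
        "passed": len(cases) - len(failures),
        "failures": failures,
    }


# Illustrative edge cases only.
cases = {
    "typical diff": "Rename variable foo to bar",
    "empty input": "",
    "very long input": "x" * 10_000,
}
report = run_eval(stub_model, cases, is_valid_review)
print(report)
```

Here the empty-input case fails the contract because the stub replies in prose instead of JSON, which is exactly the kind of edge case that slips through when prompts are only tried by hand. A candidate who has built the real version of this can tell you what the checks were and which failures surprised them.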

The Competitive Window Is Closing

The teams building this competency in 2026 are pulling ahead in ways that compound. Not because they write less code, but because they ship more reliable AI-powered systems, faster, with smaller teams. A five-person team that truly understands context engineering can own a product surface that would have taken twenty engineers two years ago.

Software is becoming an AI-first discipline. The question isn't whether to adapt — it's how fast.

— Satya Nadella, CEO at Microsoft

The engineers who can architect the context that makes AI systems behave are the force multipliers. The companies hiring them aggressively right now are making a bet that will pay out for years. The bottleneck in software engineering has shifted. It's no longer writing code. It's communicating intent to systems that can write code — with enough precision, structure, and architectural thinking that the output is trustworthy at scale. That's the skill. Find the engineers who have it.
